Doing Better Things with AI in Education: Strengthening the Instructional Core

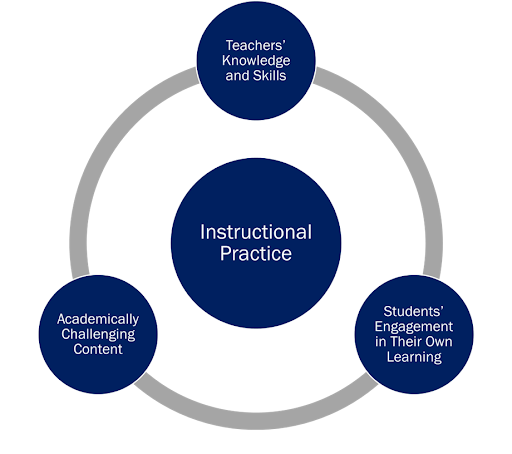

Three questions can help educators ensure that AI strengthens the instructional core, fostering rather than replacing critical thinking, creativity, and collaboration.

Artificial Intelligence (AI) is reshaping classrooms, systems, and conversations across education. Much of the current dialogue focuses on efficiency: how can we use AI to automate tasks and speed up processes? While this matters, we must pause and ask a deeper question:

How can we utilize AI not just to do things better, but to do better things?

This distinction is essential for ensuring that technology strengthens, rather than undermines, the relationships that define the instructional core, which includes students, teachers, and curriculum.

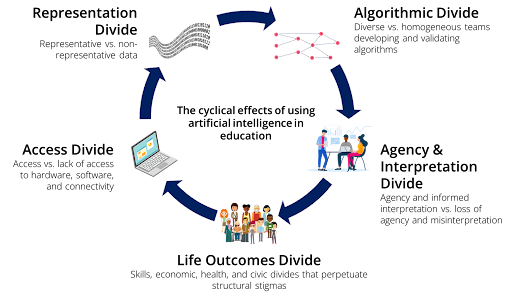

The Five Divides of AI in Education

My research and collaborations with Chris Dede, Beth Holland, Ellen Mandinach, Michael Walker, and others have identified five interconnected divides that create cyclical effects when AI is deployed in education:

The Algorithmic Divide: Who is designing the algorithms, and whose perspectives are missing? Homogeneous teams risk blind spots that create inequities.

The Data Divide: Is the data representative of all communities? Or is it biased toward particular groups, leading to skewed results?

The Access Divide: Who has access to the digital tools that generate data? Lack of access means exclusion from shaping datasets and solutions.

The Interpretation Divide: Can teachers and education leaders understand and use data, dashboards, and AI insights wisely? Without training, misinterpretation can lead to harmful instructional decisions.

The Agency Divide: Are educators and students empowered to make choices? Or are we offloading too much responsibility to the machine?

These divides are not isolated. They reinforce each other in cycles that can either create virtuous loops of equity and opportunity or vicious cycles that amplify harm and bias.

From Efficiency to Agency

AI tools like cognitive tutors or adaptive practice platforms can be powerful for skill-building. But if we stop there, we risk perpetuating the status quo, limiting critical thinking, and reinforcing what David Perkins called “fragile knowledge”: knowledge that doesn’t transfer beyond isolated tasks.

Instead, the real opportunity lies in doing better things by using AI and technology to foster critical thinking, creativity, and collaboration.

Two examples illustrate this:

Hurricane Project: When developing project-based curricula for Public Television, my high school chemistry students and I used historical data, barometers, and modern tools to study hurricanes and create an interactive website that catalogued historically significant hurricanes. Technology gave them agency to explore deeply and share their findings with peers and families.

River City Project: Chris Dede led a project where students entered a virtual 19th-century town to investigate why citizens were getting sick. Acting as scientists, they applied the scientific method, conducted experiments, and made policy recommendations. Here, technology was not a replacement; rather, it was a catalyst for engagement and agency.

In both cases, the technology strengthened relationships among students, teachers, and curriculum rather than weakening them.

Returning to the Instructional Core

The instructional core, developed by Cohen and Ball and later expanded by others, reminds us that the quality of learning hinges on three nodes: the student, the teacher, and the curriculum. Expanded versions add a fourth node, assessment.

AI should never distract us from this foundation. Instead, we must ask:

Does this technology strengthen teacher-student relationships?

Does it deepen student engagement with meaningful content?

Does it expand rather than narrow the agency of learners and educators?

If the answer to any of these questions is no, we risk creating more transactional, disengaged learning environments.

A Call to Educators and Leaders

As AI evolves, our responsibility is not simply to guard against bias or build more efficient tools. It is to tend to the human relationships at the heart of learning and ensure that AI enhances rather than diminishes them.

My advice to educators: focus on relationships. Stay grounded in the instructional core, and when a new technology arrives, ask how it enhances the relationships among students, teachers, curriculum, and assessment.

AI is not destiny. How we design, deploy, and interpret it will determine whether it fuels equitable, meaningful learning or perpetuates cycles of harm.

Let’s choose to do better things.

Listen

NGLC is grateful for our collaboration and partnership with the EDU Café Podcast, which brings fresh voices and insights to the blog. Listen to the full episode of the podcast that inspired this article.

Photo at top courtesy of NGLC, powered by LEAP Innovations